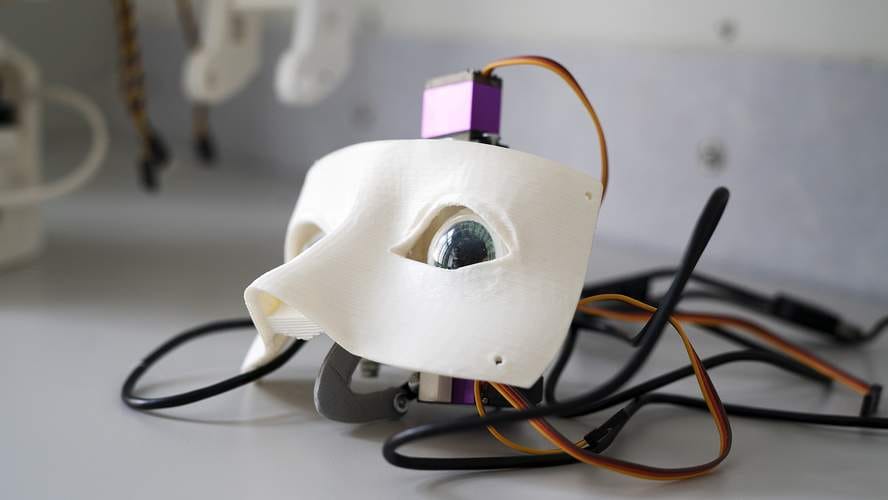

A social robot is an autonomous robot that interacts and communicates with humans by following social behaviors and rules attached to its role. We have been building one together with Helsinki Digitalents.

Our robot design of choice is the InMoov by Gael Langevin. It will be able to manipulate the physical environment a bit, but it’s not going to be good at it. Instead it looks elegant. It is a roughly human-sized humanoid robot and is able to gesture while communicating. We are giving it some extra capabilities by adding to the original design.

Attribution: Futurice, CC BY 4.0

This is not a robot for cutting the lawn, baking brownies, or strangling your enemies in their sleep. This is a protocol droid for communication. The majority of robots today are sophisticated tools, not synthetic creatures. We want to make a creature.

Why

Our business is to design and implement systems that people love to use. With this project we are building our vision for social robots of the future. We’ll use this robot to study human-robot interaction in practice.

Theory has it that interacting with a robot capable of following social behaviour, that is, of behaving in a socially competent manner, will evoke some social machinery within us and change the way we communicate. We may have more patience, even empathy.

The robot is a special user interface to a software system. As such it has some harsh limitations, but it also provides some interesting new opportunities, such as supporting familiar social cues in communication. One obvious application is a chatbot, but with social intelligence and the ability to properly express it.

How

As Gael, the designer of the robot, has been awesome and provided an open source blueprint for an InMoov robot, all we had to do was 3D print the chassis, source the electronics, put it all together, and start programming away. Easy as pie! Or so we thought.

Turns out it’s still a hell of a lot of work. Mechanical engineering is challenging when you don’t really know what you’re doing. Servos burn. Large 3D prints may crack, and finer ones, like gears, need to be filed and sanded to perfection after printing.

Attribution: Futurice, CC BY 4.0

It is not obvious which material you should choose. First we printed the robot’s hands with a LulzBot TAZ 6 printer in ABS plastic, then, looking for better performance, we redid them in nGen co-polyester filament. Now we’re trying Formlabs Tough resin on a stereolithographic 3D printer to see if the hands could still be improved. That’s liquid synthetic organic polymer solidified by laser. Using nylon would also be an option.

Attribution: Digitalents Helsinki, CC BY 4.0

In this endeavour Aalto University student groups have assisted us. They have extended the robot’s senses and studied how to control it with telepresence. This is very valuable work.

Now

We are finalising the assembly and testing some improvements, such as more expensive servos that move the fingers with better precision and less noise. We are also diving deep into the two most promising open source software frameworks for this robot: MyRobotLab and Robot Operating System.

We have done early tests with the gestures. Now we’ll look at creating an API for them, to make commanding the robot more accessible to others, developers and designers alike. Then finally we get to the truly interesting part: interaction.
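To give a feel for what such a gesture API could look like, here is a minimal sketch in Python. It is only an illustration under our own assumptions, not the actual implementation: the class names (`Keyframe`, `Gesture`, `GestureApi`), the joint names, and the angles are all hypothetical, and the servo backend is a stand-in callback rather than a call into MyRobotLab or ROS.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Keyframe:
    """One pose in a gesture (hypothetical structure)."""
    angles: Dict[str, float]  # joint name -> target angle in degrees
    hold_s: float = 0.0       # seconds to hold the pose before the next one


@dataclass
class Gesture:
    name: str
    keyframes: List[Keyframe]


class GestureApi:
    """Maps gesture names to keyframe sequences and plays them back."""

    def __init__(self, move_joint: Callable[[str, float], None],
                 sleep: Callable[[float], None]):
        # Both callbacks are injected, so the same API can drive real
        # servos or a test double. They are assumptions, not a real driver.
        self._move_joint = move_joint
        self._sleep = sleep
        self._gestures: Dict[str, Gesture] = {}

    def register(self, gesture: Gesture) -> None:
        self._gestures[gesture.name] = gesture

    def names(self) -> List[str]:
        return sorted(self._gestures)

    def play(self, name: str) -> None:
        gesture = self._gestures[name]  # KeyError for unknown gestures
        for frame in gesture.keyframes:
            for joint, angle in frame.angles.items():
                self._move_joint(joint, angle)
            if frame.hold_s > 0:
                self._sleep(frame.hold_s)


# Usage with a fake backend that only records the commands it receives.
sent = []
api = GestureApi(move_joint=lambda joint, angle: sent.append((joint, angle)),
                 sleep=lambda seconds: None)
api.register(Gesture("wave", [
    Keyframe({"right_shoulder": 90.0, "right_elbow": 45.0}, hold_s=0.5),
    Keyframe({"right_elbow": 80.0}, hold_s=0.5),
    Keyframe({"right_elbow": 45.0}),
]))
api.play("wave")
```

The point of the design is that a designer only needs to know gesture names like `"wave"`, while the mapping to individual servos stays in one place and can be swapped out when the hardware changes.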

Soon we can unleash our creatives on the robot, and witness miracles. Or tears of despair.

Future

Ultimately we want to make social robots smart by applying machine learning.

Basic stuff like machine vision face recognition is already there, but it will be very interesting to learn to communicate better, by studying and analysing the responses and reactions of people.

We might try real-time sentiment analysis of the communication, combined with interpreting human emotions from the postures and gestures. Voice stress analysis might also be useful, or emotion detection based on facial gestures. The system using the robot as an interface could then try to adapt and improve its communication in general, and perhaps also based on individual preferences, like we people do.

There are some social robots available for purchase that can be used for this type of research, such as SoftBank's Pepper, but unfortunately we haven't been able to find one that is suitably open source. We need to be able to really understand what is going on under the hood, and as we want to share our improvements, closed source is a dealbreaker.

Social robots may bring forth a significant improvement in the quality of life for people who need care or who have challenges learning or communicating with other people. They will be learning, adapting, and endlessly patient.

No attribution needed, Public Domain, CC0

As usual, we are more than happy to cooperate with researchers, and we will share all our findings and other valuable outcomes of our work as open source!

Teemu Turunen, Corporate Hippie
