What’s next for augmented and virtual reality? (Part 2)

In the [previous post](http://futurice.com/blog/augmented-and-virtual-reality-part-1) about the next 2 to 5 years in the development of AR and VR, we looked at size, power and space. In this second part - as we explore whether the technology is finally mature enough to have a mainstream impact - we look at the issues on a more detailed level: portability, control and touch.

The untethered experience

One of the more unpleasant parts of the high-end VR experience (in addition to nausea and suddenly losing your footing) is the cords that trail behind you, causing you to stumble and reminding you that you are still firmly attached to this world. In AR, cords will be less common overall, mainly justified where a much higher-end experience is the goal, as with Meta 2. VR, however, has more challenges to overcome and slightly less to gain, so getting rid of the cords is closer to a 5-year than a 2-year timeline.

An untethered experience is undeniably the final destination in the development of VR. Current attempts to squeeze the device into a portable format are at the same time amusing and intriguing. Many manufacturers are already attempting to emulate the high(er)-end experience without external computers. However, as we are still improving the experience itself, it will probably take closer to 5 years before we can even start squeezing this high-end experience into portable formats.

Some challenges requiring even more power in the future include higher image quality and screen technology improvements. Or, as Valve CEO Gabe Newell puts it, "Headsets are going to get lighter, they're going to get smaller, the resolution is going to go up. These aren't speculative things -- You'll see the VR industry leapfrogging any other display technology. You'll start to see that happening in 2018 and 2019 when you'll be talking about incredibly high resolutions."

Next up is the hot topic of simply getting rid of the cords and transmitting the data wirelessly. This is a lot more difficult than it sounds, particularly because the video stream has to respond to user actions in real time, which leaves little room for the delay that heavy compression would add.
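To get a feel for the numbers, here is a rough back-of-the-envelope sketch in Python. It assumes the HTC Vive's 2160 x 1200 combined resolution at 90 Hz and 24 bits per pixel, and it ignores blanking intervals, audio and tracking data, so treat the result as an approximate lower bound rather than a measured figure. The function name is made up for this illustration.

```python
def raw_video_bitrate_gbps(width, height, refresh_hz, bits_per_pixel=24):
    """Uncompressed video bitrate in gigabits per second (1 Gbit = 1e9 bits).

    Hypothetical helper for illustration: it counts pixel data only and
    ignores blanking, audio and tracking traffic.
    """
    return width * height * bits_per_pixel * refresh_hz / 1e9


# Assumed figures: HTC Vive, 2160 x 1200 across both eyes, 90 Hz refresh.
vive_gbps = raw_video_bitrate_gbps(2160, 1200, 90)

# Time available to render, transmit and display a single frame.
frame_budget_ms = 1000 / 90

print(f"Raw stream: {vive_gbps:.1f} Gbit/s")        # Raw stream: 5.6 Gbit/s
print(f"Time per frame: {frame_budget_ms:.1f} ms")  # Time per frame: 11.1 ms
```

Under these assumptions the raw stream is several gigabits per second, and every frame has only about 11 ms to make the whole trip. That is why the wireless link needs multi-gigabit speeds.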

Two HTC Vive partners announced wireless solutions at CES 2017: TPCAST and Intel. TPCAST has promised to deliver an affordable solution in Q2; Intel was more secretive and simply stated that its solution will use WiGig, which allows devices to communicate wirelessly at multi-gigabit speeds. Intel does have a prototype, though, which was shown off at MWC.

Quark VR has been experimenting with cutting the cords off the HTC Vive as well. Their existing product seems to stream data to devices in a way similar to Unity Remote. For their future wireless solution, they claim to use WiFi to send the signals with a simple add-on for the device. Achieving this might require quite a lot of trickery, especially given the latency issues and the need for extremely high-speed WiFi. Quark has said it is working on some final issues, but it remains to be seen when the product will be market-ready: they initially promised one by the end of 2016, and there is no news of an actual product yet.

MIT researchers have approached this from a different angle in their MoVR project, looking at how to use less-used high-frequency radio signals (millimeter waves). Millimeter waves can carry a lot of information and are currently underused, though there is a lot of research on that front in many other domains as well.

There are issues with this approach, particularly that the signal is easily blocked, which means that the movement of the user needs to be calculated and compensated for on the fly. MoVR’s biggest idea is in fact circumventing this problem by reconfiguring the device to reflect the signals in novel ways so that they always reach the receiver, even at odd angles. In the long term this would enable multiple users in the same space without signal blocking or interference. MIT researchers claim you can’t stream multiview video over modern WiFi, which puts Quark in an interesting position to prove their solution.

Portable VR devices

Another way to approach the untethered experience is to simply squeeze the device into a (semi)portable format, as Microsoft has done on the AR side with their HoloLens.

Facebook’s “Santa Cruz” prototype, revealed at the end of 2016, is a cross between Samsung’s mobile-based Gear VR and Facebook’s own flagship product, the Oculus Rift. This prototype is not to be confused with the experience on a high-end device backed by a juicy computer, whether over a wireless or a tethered connection. Santa Cruz has no set release date yet, which tells how far we still are from even a mid-range portable experience. There are important points in the product though, such as an additional 4 cameras that track the world around you to avoid collisions. With this kind of portable VR, we’ll start to see more VR accommodating the real world, and also the real world injected into the virtual one.

Intel has promised to have their Project Alloy prototype by Q4/2017. Qualcomm has promised a VRDK in Q2/2017 using their Snapdragon processors and a standalone headset. I would not hold my breath yet, but in a couple of years we'll definitely see interesting entrants among the mid-range standalone devices.

In the 5-year span, the most powerful experiences will still benefit from the combination of wireless transfer and a powerful non-portable computer, with the headset squeezed down to the bare minimum. But keep in mind that the mainstream users, the mythical “billion”, will most likely never use that. They’ll make do with their mobiles - and in a couple of years with those slightly better fully portable standalone devices that Facebook and its competitors are pushing.

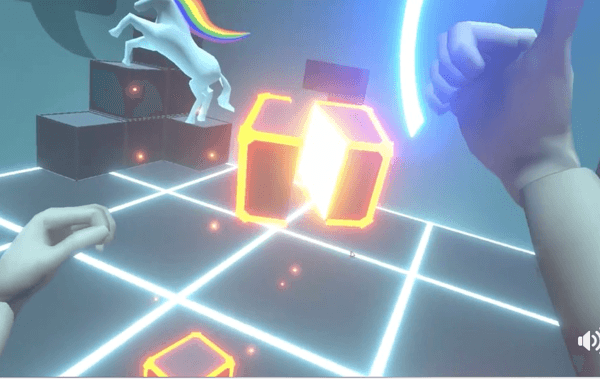

Getting control

Finding your way and interacting with the new environment is one of the key challenges in the virtual and augmented realm. In the short term, hand controllers will be the norm. Specialized controllers that are either visible in your virtual world, blinking and showing options (Vive) or controllers that can mimic your hand movements (Oculus Touch) will be used for several years.

In the long term, the physical controllers will diversify and generic ones will disappear. Most interactions will be done with gestures and voice, and physical controllers will remain where they make the most sense: when we want a device resembling its real-world counterpart, or an imaginary physical device in the virtual world. Examples of these specialized controllers are things like steering wheels and snowboard simulators for driving and sports applications, or magic wands for that virtualized MMORPG where you will be the mightiest wizard of them all.

Here at Futurice's FutuLabs, we are already exploring new ways of interaction as well: using your hands with LeapMotion and Oculus Rift, and occasionally donning a pair of classic Reino slippers with controllers attached to get more accurate full-body movement.

Haptic feedback

When I first used the Oculus Touch controllers, there was one particular moment that captured my attention. The way the physical controller maps your hands to their virtual counterparts feels surprisingly real and makes the virtual space feel that much more realistic. However, the illusion broke at the beginning of the Oculus Tutorial: I was supposed to lift my index finger and press down a virtual lid. That is exactly what I did, only to discover how weird it feels to have no sensation of actually touching the lid as my virtual hand pushed it.

Haptic feedback is still a little further ahead, but it is something we will crave and will ultimately use. Some companies, like Teslasuit, are going for a full-body suit, and others for something less ambitious like the Hands Omni glove, but this is not something mainstream users will wear. The only way for this kind of approach to reach mainstream use is for our normal clothes to evolve towards more intelligent materials.

Prior to that, we’ll be happy to start with simple tactile experiences. There is a range of approaches built on different technologies. It seems, then, that we won’t have a single solution soon, but rather a range of feedback systems suitable for different scenarios.

Ultrahaptics uses ultrasound to create a feeling of touch. Tactical Haptics, on the other hand, represents the simpler solution: adding haptic feedback to gaming controllers. We can expect this kind of feedback in most controllers in the near future.

Conclusion

Virtual and augmented reality have come a long way from the earliest clumsy attempts. Now the technology, while still only showing a fraction of its potential, is finally mature enough to hint at mainstream success.

Until now, VR and AR have relied on many existing components, such as mobile screens. Now we see a turn where VR and AR start to push and drive the development of some of these technologies instead of just utilizing existing solutions. Screen technology is a good example.

Mainstream adoption of VR and AR will be stealthier and centered around mobile usage over the next few years. The adoption of additional gadgets, such as glasses, depends on killer apps and the gadgets' ability to blend in. AR will seep slowly into people's everyday lives, while more comprehensive solutions will be explored and adopted by different industries to help in diverse fields from manufacturing to construction, maintenance and so forth.

- Kirsi Louhelainen, Senior Consultant, FutuLabs Lead